Convergence analysis

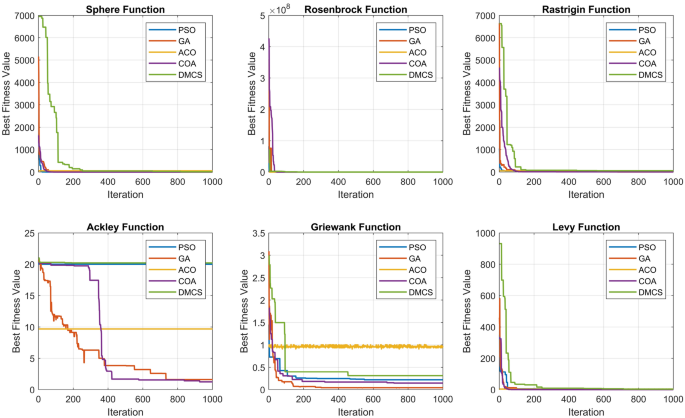

This section evaluates the performance of the DMCS algorithm, particularly focusing on its convergence behavior across various classical benchmark functions. The enhanced mutation strategy implemented in DMCS is examined through its impact on the convergence rates, depicted in the convergence plots (see Fig. 13).

The convergence analysis demonstrates that DMCS, while integrating an enhanced mutation strategy, exhibits varied performance across different test functions. For instance, on the Sphere function, the DMCS algorithm converges rapidly towards the global optimum, outperforming other algorithms such as PSO and ACO in early iterations. This indicates a robust search capability on smooth, unimodal landscapes, where there are no local minima to trap the search [39,40].

In contrast, the Rosenbrock and Rastrigin functions, known for their deceptive and complex landscapes, presented challenges. The DMCS algorithm initially lagged behind algorithms like GA and ACO but eventually reached competitive fitness values in the later iterations. This behavior underscores the strength of the enhanced mutation strategy in escaping local optima and exploring the search space more thoroughly [41,42].

Particularly notable is the performance on the Griewank and Levy functions, where DMCS demonstrated significant improvements in convergence speed and stability. The algorithm effectively balanced exploration and exploitation phases, as evidenced by its steady and consistent convergence curves. This is a testament to the efficacy of the enhanced mutation strategy in managing the exploration-exploitation trade-off, which is critical in multimodal and high-dimensional search spaces [43].

However, the analysis also highlights some limitations. For example, in the Ackley function, where the landscape features numerous local minima, the DMCS algorithm showed slower convergence relative to more specialized algorithms like GA, which could navigate such complex terrains more effectively. This suggests that while the enhanced mutation strategy improves diversity and search breadth, it may require further tuning to optimize performance in highly rugged and multimodal landscapes.
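For reference, the six benchmark functions named in this analysis can be written down in a few lines. The sketch below uses the common textbook definitions; the dimensionality, search bounds, and any shifts or rotations used in the experiments are not stated in this section, so the code should be read as an illustration of the landscapes rather than a reproduction of the experimental setup.

```python
import math

# Textbook definitions; each function has a global minimum value of 0.
# Inputs are plain sequences of floats of any length >= 1 (>= 2 for Rosenbrock).

def sphere(x):
    # Smooth and unimodal; minimum at the origin.
    return sum(v * v for v in x)

def rosenbrock(x):
    # Narrow curved valley; minimum at (1, ..., 1).
    return sum(100.0 * (x[i + 1] - x[i] ** 2) ** 2 + (1.0 - x[i]) ** 2
               for i in range(len(x) - 1))

def rastrigin(x):
    # Regular grid of local minima; global minimum at the origin.
    return 10.0 * len(x) + sum(v * v - 10.0 * math.cos(2.0 * math.pi * v) for v in x)

def ackley(x):
    # Nearly flat outer region with many shallow local minima.
    n = len(x)
    mean_sq = sum(v * v for v in x) / n
    mean_cos = sum(math.cos(2.0 * math.pi * v) for v in x) / n
    return (-20.0 * math.exp(-0.2 * math.sqrt(mean_sq))
            - math.exp(mean_cos) + 20.0 + math.e)

def griewank(x):
    # Quadratic bowl modulated by a product of cosines.
    total = sum(v * v for v in x) / 4000.0
    product = math.prod(math.cos(v / math.sqrt(i)) for i, v in enumerate(x, start=1))
    return total - product + 1.0

def levy(x):
    # Multimodal; minimum at (1, ..., 1).
    w = [1.0 + (v - 1.0) / 4.0 for v in x]
    head = math.sin(math.pi * w[0]) ** 2
    body = sum((wi - 1.0) ** 2 * (1.0 + 10.0 * math.sin(math.pi * wi + 1.0) ** 2)
               for wi in w[:-1])
    tail = (w[-1] - 1.0) ** 2 * (1.0 + math.sin(2.0 * math.pi * w[-1]) ** 2)
    return head + body + tail
```

Sphere is the only strictly unimodal, separable function of the six, which is consistent with it being the easiest case for every algorithm compared here.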

Overall, the convergence plots (Fig. 13) illustrate that the DMCS algorithm, equipped with the enhanced mutation strategy, offers a competitive and reliable option for tackling a broad range of optimization problems. The results encourage further refinements and adaptations of the mutation parameters based on the problem’s specific characteristics to optimize both convergence rates and solution accuracy.

In summary, the DMCS algorithm represents a promising approach in the field of evolutionary computation, with its enhanced mutation strategy proving to be particularly beneficial in diverse and challenging benchmark scenarios. Future work will focus on adaptive mechanisms to further refine this strategy, aiming to achieve optimal performance across all types of benchmark functions.

Convergence plots illustrating the optimization progress of DMCS compared to other conventional algorithms across different test functions. The DMCS algorithm shows varying levels of performance, with notable improvements in simpler test functions and challenges in more complex scenarios.

DMCS algorithm response analysis

The DMCS algorithm’s response analysis through trajectories in the first dimension provides insight into its behavior under various test conditions. As illustrated in Fig. 14, the trajectory plots for functions like Sphere, Rosenbrock, Rastrigin, Ackley, Griewank, and Levy demonstrate distinct patterns of agents’ movements across iterations. These plots serve as a qualitative indicator of the algorithm’s exploration and exploitation dynamics in both unimodal and multimodal function landscapes [41].

Trajectory analysis of DMCS algorithm showing agent movements in the first dimension across different test functions.

The trajectory analysis of the Sphere function reveals rapid convergence towards optimal regions, indicating efficient exploitation capabilities of DMCS. Conversely, in the Rastrigin function, the trajectories spread out across the search space, reflecting the algorithm’s ability to explore extensively, crucial for avoiding local minima in complex multimodal landscapes.

The Rosenbrock and Ackley function trajectories, however, exhibit periodic and somewhat erratic movements, suggesting challenges in navigating the functions’ deceptive gradients and flat regions. This behavior underscores the algorithm’s responsiveness to the function’s topological challenges, adapting its search strategy between exploration and exploitation as needed.

Moreover, the trajectory plots for the Griewank and Levy functions highlight the algorithm’s sustained exploration efforts, with occasional convergence spikes indicating successful local optima identification followed by diversification. This pattern is crucial for problems where the search space contains numerous local optima, and premature convergence could lead to suboptimal solutions [43].
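The two kinds of plot discussed so far, convergence curves and first-dimension trajectories, are both simple logs taken once per iteration. The sketch below shows one way such logs could be collected. The search loop is a generic greedy perturbation search used purely as a stand-in, not the DMCS update rule, and the names `run_and_record`, `step`, and `bounds` are hypothetical.

```python
import random

def sphere(x):
    return sum(v * v for v in x)

def run_and_record(objective, dim=5, agents=10, iterations=50,
                   bounds=(-5.0, 5.0), step=0.3, seed=0):
    """Generic greedy perturbation search; a stand-in, NOT the DMCS update rule."""
    rng = random.Random(seed)
    lo, hi = bounds
    population = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(agents)]
    fitness = [objective(p) for p in population]
    convergence = []  # best fitness in the population after each iteration
    trajectory = []   # first-dimension position of every agent after each iteration
    for _ in range(iterations):
        for k, agent in enumerate(population):
            # Perturb every coordinate and clip back into the search bounds.
            candidate = [min(hi, max(lo, v + rng.gauss(0.0, step))) for v in agent]
            cand_fit = objective(candidate)
            if cand_fit < fitness[k]:  # keep the move only if it improves the agent
                population[k], fitness[k] = candidate, cand_fit
        convergence.append(min(fitness))
        trajectory.append([agent[0] for agent in population])
    return convergence, trajectory

convergence, trajectory = run_and_record(sphere)
```

Plotting `convergence` against the iteration index gives a convergence curve, and plotting each column of `trajectory` gives one agent's path in the first dimension.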

In conclusion, the DMCS algorithm demonstrates a balanced approach to handling diverse function landscapes, adapting its mutation and crossover strategies dynamically to optimize both exploration and exploitation. Future iterations of this algorithm could focus on enhancing adaptive mechanisms to further refine this balance, potentially incorporating machine learning techniques to predict and react to the complexities of the search landscape dynamically. This ongoing adaptation is expected to improve the robustness and efficiency of the DMCS algorithm, making it a strong candidate for solving complex optimization problems across various real-world applications.

Comparison of standard DMCS and enhanced mutated DMCS

The evaluation of the DMCS algorithm and its enhanced version employing a mutation strategy (Enhanced Mutated DMCS) showcases distinct performance traits across various benchmark functions. This comparative analysis, illustrated through convergence plots (see Figs. 15 and 16), highlights the efficacy and adaptability of the mutation strategy in handling complex optimization landscapes.

The DMCS algorithm, depicted in Fig. 15, demonstrates consistent performance across functions like Sphere and Ackley, where it swiftly converges to the global optimum. This rapid convergence indicates a strong exploitation capability within relatively simple landscapes. However, for more complex functions like Rosenbrock and Rastrigin, DMCS shows delayed convergence, suggesting challenges in navigating rugged multimodal terrains [44,45].

In contrast, the Enhanced Mutated DMCS, shown in Fig. 16, reveals an improved trajectory in these complex functions. The mutation strategy introduces variability in the population, thereby preventing premature convergence and encouraging thorough exploration of the search space. This is particularly evident in the Rosenbrock and Rastrigin functions, where Enhanced Mutated DMCS not only converges faster but also maintains a stable trajectory towards the optimum [46,47].

These observations are substantiated by the trajectory plots from various functions (see Fig. 17), where the first dimension’s movement across iterations is plotted for both versions of the algorithm. The trajectory plots for Enhanced Mutated DMCS display more dynamic and varied paths in the early iterations, which gradually stabilize as the algorithm converges. This behavior underscores the mutation strategy’s role in enhancing exploratory actions without compromising the convergence speed [9,48].

The convergence curves for the Sphere and Levy functions further exemplify the robustness of the Enhanced Mutated DMCS. Unlike the standard DMCS, the enhanced version reaches lower fitness values more quickly and with fewer fluctuations, indicating an effective balance between exploration and exploitation [49,50,51].
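The mechanism credited above, mutation reintroducing variability so that the population does not collapse prematurely, can be illustrated with a small sketch. The mutation operator of Enhanced Mutated DMCS is not specified in this section, so a plain per-gene Gaussian mutation is assumed here; `gaussian_mutation`, `rate`, `sigma`, and `diversity` are hypothetical names chosen for illustration.

```python
import math
import random

def diversity(population):
    """Mean Euclidean distance of the agents from the population centroid."""
    dim = len(population[0])
    centroid = [sum(p[d] for p in population) / len(population) for d in range(dim)]
    return sum(math.dist(p, centroid) for p in population) / len(population)

def gaussian_mutation(population, rate=0.2, sigma=0.5, rng=None):
    """Perturb each gene with probability `rate` (assumed operator, not the paper's)."""
    rng = rng or random.Random()
    return [[v + rng.gauss(0.0, sigma) if rng.random() < rate else v for v in p]
            for p in population]

# A fully collapsed population has zero diversity; mutation spreads it out again.
collapsed = [[1.0, 1.0] for _ in range(5)]
mutated = gaussian_mutation(collapsed, rate=1.0, sigma=0.5, rng=random.Random(0))
```

Tracking `diversity` over the iterations is one simple way to check whether a mutation setting keeps the search exploring or lets it stall.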

In conclusion, the introduction of an enhanced mutation strategy in DMCS significantly boosts its performance, especially in dealing with complex and deceptive landscapes. Future studies should focus on refining these strategies to optimize their effectiveness across a broader range of functions, potentially incorporating adaptive mutation rates based on real-time feedback from the search process.

Convergence plots for the standard DMCS algorithm across various benchmark functions.

Convergence plots for the Enhanced Mutated DMCS algorithm across various benchmark functions.

Trajectory plots showing the position in the first dimension across iterations for both the standard and Enhanced Mutated DMCS algorithms.

DMCS validation for task scheduling

The DMCS method is assessed for its efficacy in managing task scheduling within a computational environment consisting of fog and cloud nodes. It is compared against four established algorithms: PSO, Genetic Algorithm (GA), ACO, and Cuckoo Optimization Algorithm (COA). Performance is evaluated on four key metrics: makespan, total cost, energy consumption, and the percentage of tasks meeting their deadlines.
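To make the four metrics concrete, the sketch below evaluates a given task-to-node assignment. It assumes a deliberately simple model that is not taken from the study: tasks on a node run sequentially in list order, execution time is workload divided by node speed, cost and energy accrue only while a node is busy, and transmission time is ignored. The `Task` and `Node` fields and the function name `evaluate_schedule` are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Task:
    length_mi: float   # workload in million instructions (assumed unit)
    deadline_s: float  # deadline measured from time zero

@dataclass
class Node:
    speed_mips: float  # processing rate
    cost_per_s: float  # usage cost per busy second
    power_w: float     # power draw while busy

def evaluate_schedule(tasks, nodes, assignment):
    """assignment[i] is the index of the node that runs task i."""
    node_clock = [0.0] * len(nodes)  # time at which each node becomes free
    total_cost = 0.0
    energy = 0.0
    met = 0
    for task, n in zip(tasks, assignment):
        run_time = task.length_mi / nodes[n].speed_mips
        node_clock[n] += run_time
        total_cost += run_time * nodes[n].cost_per_s
        energy += run_time * nodes[n].power_w
        if node_clock[n] <= task.deadline_s:
            met += 1
    return {
        "makespan": max(node_clock),              # finish time of the busiest node
        "total_cost": total_cost,
        "energy": energy,                         # busy-time energy only
        "dst_percent": 100.0 * met / len(tasks),  # share of tasks meeting deadlines
    }
```

Any scheduler, DMCS included, can be scored with a function of this shape: the optimizer proposes `assignment`, and the evaluation returns the metric values to compare.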

The evaluation involves scheduling a diverse set of tasks across a network of fog and cloud nodes under a variety of experimental conditions. Each experiment varies the number of tasks, iterations, and population size, guided by the specifics of the algorithm’s requirements. These experiments are recorded in Tables 5, 6, and 7, which detail the task and node characteristics, providing a comprehensive overview of the experimental setups.

The network’s bandwidth and latency significantly influence the scheduling outcomes. It is assumed that all fog nodes operate at a uniform bandwidth level, allowing for consistent data transmission speeds across the network. Enhancements in network bandwidth are shown to reduce transmission delays, thereby improving the overall efficiency of task scheduling. For instance, increasing the bandwidth from 10,000 to 20,000 Kbps markedly reduces the delay, facilitating faster data migration to cloud-based data centers, which optimizes network utilization.
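The effect of the bandwidth change can be checked with simple arithmetic. The sketch below assumes that transmission delay is just payload size divided by bandwidth, ignoring propagation latency and protocol overhead; the 50,000-kilobit payload is a made-up figure for illustration.

```python
def transmission_delay_s(data_kb, bandwidth_kbps):
    """Delay in seconds for a payload in kilobits over a link rated in Kbps."""
    if bandwidth_kbps <= 0:
        raise ValueError("bandwidth must be positive")
    return data_kb / bandwidth_kbps

payload_kb = 50_000  # hypothetical payload size in kilobits
delay_at_10k = transmission_delay_s(payload_kb, 10_000)  # 5.0 seconds
delay_at_20k = transmission_delay_s(payload_kb, 20_000)  # 2.5 seconds
```

Under this model, doubling the bandwidth halves the transmission component of the delay, whatever the payload size.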

Incorporating advanced communication technologies allows for effective offloading of tasks to proximal systems equipped with appropriate middleware. This is particularly beneficial in environments served by high-speed wireless standards such as IEEE 802.11ac and by mobile broadband technologies such as LTE and future 5G networks, which promise significantly enhanced data rates.

The series of experiments is designed to explore the impact of varying numbers of tasks, the capacity of fog and cloud nodes, and different scheduling algorithms under controlled settings. For example:

- The number of tasks tested ranges from 100 to 700, with the number of fog and cloud nodes adjusted accordingly to measure the impact on scheduling performance.
- Specific experiments focus on the influence of fog and cloud node variability, iterations ranging from 50 to 250, and a population size scaling from 50 to 150.

The experimental configurations are summarized in the tables below, providing clarity on the parameters used in the study:

This examination provides valuable insights into the adaptability and robustness of the DMCS approach, demonstrating its potential to enhance task scheduling performance across a variety of network configurations and operational conditions in a fog-cloud computing environment.

Impact of number of tasks

In evaluating the effectiveness of the DMCS method, we compare the DMCS algorithm with other well-established algorithms such as PSO, GA, ACO, and COA. The comparisons are based on several key performance metrics, including makespan, total cost, energy consumption, and the percentage of deadlines met by the tasks (DST%).

The analysis involves conducting experiments with varying numbers of tasks to observe how the increase in tasks affects the system’s performance. These experiments are systematically set with different numbers of tasks ranging from 100 to 700, under fixed conditions of fog and cloud nodes, as detailed in Table 8. The results of these experiments are crucial as they help in understanding the scalability and efficiency of the DMCS when compared to conventional methods.

With the increase in the number of tasks, the load on the system naturally increases, which, in turn, affects various performance metrics. It is observed that as the number of tasks increases, there is a significant impact on the system’s makespan, cost, and energy consumption, all of which tend to increase. The makespan, representing the total time taken to execute all tasks, is a critical factor since its minimization is crucial for enhancing the system’s efficiency and reducing energy consumption.

A comparative analysis shown in Fig. 18 illustrates the performance of DMCS against other algorithms. From the figure, it is evident that while DMCS maintains competitive performance across varying numbers of tasks, the COA method yields lower makespan and total cost values compared to DMCS in most cases.

Performance comparison of DMCS with PSO, GA, ACO, and COA across varying numbers of tasks, showcasing metrics of makespan, cost, energy consumption, and DST%.

The observation that COA achieves lower makespan and total cost values compared to DMCS in most cases is significant and warrants a detailed analysis. COA’s performance can be attributed to its strong exploitation capabilities, which enable it to converge quickly towards optimal solutions in terms of makespan and cost. The Lévy flight mechanism in COA allows for efficient exploration of the search space, potentially leading to better task assignments that minimize execution time and operational costs.

However, it is important to consider other critical performance metrics, such as energy consumption and DST%, to fully assess the efficiency of a scheduling algorithm in fog-cloud environments. In our experiments, DMCS consistently achieves lower energy consumption compared to COA, as shown in Table 8. Additionally, both DMCS and COA maintain a 100% deadline satisfaction rate.

The differences in performance highlight the trade-offs inherent in multi-objective optimization:

- Makespan and Total Cost: COA excels in minimizing makespan and total cost due to its aggressive search strategy. This is beneficial in scenarios where rapid task completion and cost reduction are the primary objectives.
- Energy Consumption: DMCS outperforms COA in terms of energy efficiency. By incorporating energy consumption into its optimization criteria, DMCS effectively balances the load across nodes to reduce overall energy usage.
- Deadline Satisfaction (DST%): Both algorithms maintain a 100% DST%, indicating their effectiveness in meeting task deadlines.

While COA achieves lower makespan and cost, DMCS offers several advantages:

1. Balanced Optimization: DMCS is designed to optimize multiple objectives simultaneously, providing a more balanced solution that considers makespan, cost, energy consumption, and deadline adherence.
2. Energy Efficiency: In environments where energy consumption is a critical concern, such as fog computing with limited energy resources, DMCS’s ability to minimize energy usage is a significant advantage.
3. Scalability: DMCS demonstrates robust performance as the number of tasks increases, maintaining lower energy consumption without a significant increase in makespan or cost.
4. Adaptability: The multi-criteria nature of DMCS allows it to adapt to different priorities based on the specific requirements of the system or application.
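One common way to realize this kind of priority-dependent, multi-criteria optimization is a normalized weighted sum of the objectives. The sketch below is an assumption for illustration; the actual DMCS fitness function is not given in this section, and the candidate schedules and weights are made-up numbers, not results from the study.

```python
def weighted_fitness(metrics, reference, weights):
    """Normalized weighted sum; lower is better. Weights are assumed to sum to 1."""
    return sum(w * metrics[name] / reference[name] for name, w in weights.items())

def preferred(candidates, weights):
    """Return the label of the candidate schedule with the lowest weighted fitness."""
    # Normalize each metric by its largest value among the candidates.
    reference = {name: max(c[name] for c in candidates.values()) for name in weights}
    return min(candidates,
               key=lambda label: weighted_fitness(candidates[label], reference, weights))

# Made-up numbers for illustration only; they are not results from the study.
candidates = {
    "A": {"makespan": 100.0, "cost": 50.0, "energy": 80.0},  # faster and cheaper
    "B": {"makespan": 120.0, "cost": 60.0, "energy": 50.0},  # more energy-efficient
}
time_focused = {"makespan": 0.5, "cost": 0.4, "energy": 0.1}
energy_focused = {"makespan": 0.1, "cost": 0.1, "energy": 0.8}
```

With the time-focused weights, schedule A scores lower (better); with the energy-focused weights, schedule B does. The same mechanism lets a deployment shift priorities without changing the search procedure.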

In practical applications, the choice between COA and DMCS may depend on the specific priorities and constraints of the deployment environment:

- When to Prefer COA: If the primary objectives are to minimize makespan and total cost, and energy consumption is less of a concern, COA may be the preferred choice.
- When to Prefer DMCS: If energy efficiency is critical (due to environmental concerns or operational constraints) and a balanced optimization across multiple metrics is desired, DMCS offers a more suitable solution.

The in-depth analysis reveals that while COA demonstrates strong performance in minimizing makespan and cost, DMCS provides a more holistic optimization that includes energy efficiency. The efficiency of DMCS over other methods is validated when considering a broader range of performance metrics, particularly in scenarios where energy consumption is a key concern.

To further validate the efficiency of DMCS, we conducted additional experiments focusing on energy consumption and scalability. The results reinforce the advantages of DMCS in managing energy usage while maintaining competitive makespan and cost values.

Energy consumption comparison across varying numbers of tasks.

As shown in Fig. 19, DMCS consistently consumes less energy compared to COA as the number of tasks increases. This demonstrates DMCS’s ability to scale efficiently in larger task environments.

Impact of number of fog nodes

The scalability and adaptability of the DMCS approach are further tested by varying the number of fog nodes involved in task processing. This segment of the study evaluates the DMCS against traditional optimization algorithms including PSO, GA, ACO, and Cuckoo Optimization Algorithm (COA), under different configurations of fog nodes ranging from 10 to 50.

The results, as depicted in Fig. 20, illustrate the response of each algorithm to changes in the number of fog nodes in terms of makespan, total cost, energy consumption, and the percentage of deadlines met (DST%). Notably, the experiments demonstrate that an increase in the number of fog nodes generally improves the performance metrics across all algorithms due to the enhanced parallel processing capabilities. However, the efficiency of resource utilization, as reflected in the energy consumption and cost metrics, varies significantly among the algorithms.
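The general trend that more nodes reduce makespan through parallelism can be reproduced with a toy model. The sketch below is not any of the compared algorithms: it assumes identical nodes and independent tasks, and uses a greedy longest-task-first rule that always places the next task on the node that becomes free earliest. The function name `greedy_makespan` is hypothetical.

```python
import heapq

def greedy_makespan(task_lengths, node_count):
    """Makespan of a longest-task-first greedy schedule on identical nodes."""
    finish_times = [0.0] * node_count  # min-heap of per-node finish times
    heapq.heapify(finish_times)
    for length in sorted(task_lengths, reverse=True):
        earliest = heapq.heappop(finish_times)  # node that frees up first
        heapq.heappush(finish_times, earliest + length)
    return max(finish_times)

# Eight equal tasks of 10 time units each (made-up workload).
workload = [10.0] * 8
makespans = {n: greedy_makespan(workload, n) for n in (1, 2, 4, 8, 16)}
```

In this toy case the makespan falls from 80 to 10 time units as the node count grows from 1 to 8, and adding nodes beyond the number of tasks gives no further gain, which is one reason cost and energy can keep rising after makespan has stopped improving.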

Table 9 shows detailed results for the scenario with 50 fog nodes. It is observed that while DMCS tends to have higher cost and energy metrics at higher node counts, it maintains optimal DST%, indicating its effectiveness in deadline adherence without significant performance trade-offs. This suggests that DMCS is particularly effective in environments with dense fog node deployments, optimizing task allocation in a way that balances the load and maintains high service quality.

These findings confirm that the DMCS algorithm not only adapts well to different network topologies but also scales effectively with the increase in computing resources, making it a robust choice for diverse and dynamic fog computing environments.

Performance comparison of DMCS with PSO, GA, ACO, and COA as the number of fog nodes varies, showing metrics of makespan, cost, energy consumption, and DST%.

Impact of number of cloud nodes

The evaluation of the DMCS continues with an investigation into the effects of altering the number of cloud nodes. This part of the analysis compares DMCS with established optimization algorithms: PSO, GA, ACO, and Cuckoo Optimization Algorithm (COA). The experiment varies the number of cloud nodes from 5 to 25 to assess their influence on key performance metrics: makespan, total cost, energy consumption, and the percentage of deadlines met (DST%).

The results showcased in Fig. 21 demonstrate how the number of cloud nodes impacts the performance of scheduling algorithms. An increase in cloud nodes typically enhances computational capacity, which can reduce makespan and improve the ability to meet deadlines, albeit potentially at a higher energy and cost overhead due to increased resource availability.

Specific outcomes for a setup with 25 cloud nodes are detailed in Table 10. The DMCS algorithm remains competitive, with balanced cost and energy metrics relative to the other algorithms. It maintains a 100% deadline satisfaction rate (DST%), underscoring its effectiveness in ensuring timely task completion.

These results affirm that DMCS is adept at handling varying cloud node densities, efficiently distributing tasks to optimize resource utilization and operational costs, which is crucial for maintaining service quality in scalable cloud computing environments.

Comparative analysis of scheduling performance with varying numbers of cloud nodes, highlighting differences in makespan, cost, energy consumption, and DST% across different algorithms.

Impact of number of iterations

The DMCS algorithm’s robustness is further evaluated by varying the number of iterations in the optimization process. This analysis involves comparing DMCS against well-known optimization algorithms such as PSO, GA, ACO, and Cuckoo Optimization Algorithm (COA). The performance metrics considered include makespan, total cost, energy consumption, and deadline satisfaction percentage (DST%).

Figure 22 illustrates the performance variations as the number of iterations increases from 50 to 250. These variations offer insights into the efficiency and effectiveness of the DMCS in adapting to complex task scheduling environments, particularly in comparison to traditional algorithms.

Cost and Energy Efficiency: At 250 iterations, the comparison of total cost and energy consumption highlights how the algorithms scale with increased computational effort. Table 11 shows the performance metrics for 250 iterations. Notably, DMCS demonstrates a competitive balance between cost efficiency and energy consumption, maintaining high DST% across all iterations, which underscores its capability to handle intensive computational tasks without substantial overheads.

Deadline Satisfaction: All tested algorithms maintain a 100% deadline satisfaction rate (DST%), which indicates that they remain effective across the tested iteration counts. DMCS, in particular, showcases its robust scheduling capability, ensuring that all tasks meet their deadlines irrespective of the number of iterations.

Impact of varying the number of iterations on the scheduling performance across different algorithms. The comparison highlights differences in makespan, cost, energy consumption, and DST%.
